The Growing Risk of Novel Cyber Physical Attacks via Social Engineering and its Implications for Technological Determinism

Master's thesis submission: Analyzing the conversion of social network users into living weapons utilized in mass manipulation attacks

The following was submitted as my master’s thesis:

DOI: https://doi.org/10.17918/00001352

Acknowledgements

I’d like to preface this research by acknowledging the inflammatory nature of topics such as the January 6th insurrection and the QAnon conspiracy. Both have had an enormous impact on the United States. The research contained in this paper does not justify or defend the actions related to either topic to any degree. These events are mentioned exclusively to provide insight into a possible explanation of the factors that led to these outcomes and to clearly illustrate possible outcomes of mass manipulation attacks.

Abstract

The goal of this paper is to outline how social media has the potential to be utilized as a tool to mobilize radicalized groups and manipulate them into committing cyber physical attacks. The purpose is to bring attention to recent domestic terrorist attacks and social unrest and reframe them as results of cyber physical attacks. This paper covers the constantly growing social media userbase, which results in an expanding resource pool for bad actors. This coverage includes annual userbase growth, social media platform penetration rates, daily social media usage in minutes, and social media self-control failure. The growth of social media adoption may result in more polarized social networks as new users congregate with other users who hold similar interests and opinions. Online social network polarization will be examined, including its causes. Additionally, the radicalization of polarized online social groups will be analyzed along with tools created specifically to exacerbate and expedite this process. Furthermore, this paper will examine the effects of social contagion in an online social network with consideration of the implications technological determinism has on that network.

Introduction

Technology creates ever-evolving tools whose impacts on society appear impossible to predict. Arguably the most revolutionary piece of technology ever made, the internet has forever changed the world we live in. While it was created as a tool for communication among the scientific community and the United States Defense Department, it has become a new world for the world’s population to explore and manipulate to best reach their own goals. Most internet users utilize it for benevolent purposes, while bad actors take advantage of the opportunities provided by the interconnected network for nefarious purposes such as stealing money, identities, or information, along with a multitude of other malicious operations. However, not every malicious action is carried out by the bad actor themself. With social engineering techniques, average internet users can be convinced to perform actions that they would not have performed under normal circumstances. Various social and psychological influences can affect the effectiveness of these social engineering techniques, ranging from partaking in a polarized online environment to indulging in violent groupthink. These effects can spread from user to user through the psychological phenomenon known as emotional contagion, resulting in users acting abnormally on a massive scale. The potential for bad actors to take advantage of average internet users only grows as the collective internet userbase grows, resulting in a larger attack surface for these massive scale manipulation attacks.

Literature Review

The rapid growth of social media

Since the inception of social media with Six Degrees in 1996, social media usage has grown at a staggering rate globally, with over half of the world’s 7.7 billion people contributing to the overall userbase. For the most accurate representation of active users and territory penetration, social networks report their growth based on the number of monthly active users (MAUs) rather than the number of user accounts (Jones, 2022). In the last decade social network platforms have increased their userbase from 970 million MAUs in 2010 to 4.48 billion MAUs in July of 2021. To break that down, in roughly the latter half of that period the userbase increased by 115.59% overall, growing from approximately 2.08 billion users in 2015 to approximately 4.48 billion in 2021, an average increase of 12.83% annually (Statista Research Department, 2022). Annual userbase increases were reported as:

2015: 2.078 billion active users

2016: 2.307 billion active users (+11% year-on-year increase)

2017: 2.796 billion active users (+21% year-on-year increase)

2018: 3.196 billion active users (+9% year-on-year increase)

2019: 3.484 billion active users (+9.2% year-on-year increase)

2020: 3.960 billion active users (+13.7% year-on-year increase)

2021: 4.480 billion active users (+13.13% year-on-year increase)
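The growth arithmetic behind this list can be reproduced with a short sketch. It assumes only the active-user counts listed above; the function names are illustrative and not taken from any cited source. Values recomputed from the counts need not match the reported percentages exactly, since those come from the cited sources. For example, the 2017 and 2018 counts imply a larger change than the reported +9%. The sketch also prints a compound annual growth rate, a different measure from the simple mean of yearly changes, which works out to roughly 13.7% for these counts.

```python
# Monthly active users in billions, copied from the list above.
mau_billions = {
    2015: 2.078, 2016: 2.307, 2017: 2.796, 2018: 3.196,
    2019: 3.484, 2020: 3.960, 2021: 4.480,
}

def year_on_year(series):
    """Percent change of each listed year relative to the previous one."""
    years = sorted(series)
    return {
        curr: (series[curr] / series[prev] - 1) * 100
        for prev, curr in zip(years, years[1:])
    }

def total_growth(series):
    """Percent change from the first listed year to the last."""
    years = sorted(series)
    return (series[years[-1]] / series[years[0]] - 1) * 100

def compound_annual_growth(series):
    """Constant yearly rate that would produce the same total growth."""
    years = sorted(series)
    periods = years[-1] - years[0]
    ratio = series[years[-1]] / series[years[0]]
    return (ratio ** (1 / periods) - 1) * 100

yoy = year_on_year(mau_billions)
for year, pct in yoy.items():
    print(f"{year}: {pct:+.2f}%")
print(f"total growth 2015-2021: {total_growth(mau_billions):+.2f}%")
print(f"mean of yearly changes: {sum(yoy.values()) / len(yoy):.2f}%")
print(f"compound annual growth: {compound_annual_growth(mau_billions):.2f}%")
```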

In the U.S. alone the total number of social media users grew by 10 million between 2020 and 2021 (Dixon, 2022b). The U.S. has a social media penetration rate of 72.3%, calculated as the number of active social network users divided by the total population, which corresponds to a total of 231.47 million people. The average user in the U.S. has approximately 7.1 different social media accounts, with YouTube and Facebook being the two most popular social media sites, normally visited daily (Dean, 2021).

Globally, these high penetration rates can also be seen, with 93.33% of all 4.8 billion internet users and 85% of all 5.27 billion mobile internet users being active on social media as of 2021 (Dixon, 2022c). Country-by-country penetration rates can be as high as 99%, as in the U.A.E., with a global average of 49% regardless of age and a global average of 63% for eligible users aged 13+ (the industry standard minimum age for creating a social media account) as of 2020. The average social media user worldwide accesses 6.6 social media platforms on a monthly basis as of 2021. More specifically, according to the Global Web Index, the average millennial or Gen Z-er worldwide has 8.4 social media accounts, an increase from 4.8 accounts in 2014. The average number of social media accounts varies by country, from as low as 3.8 per user in Japan to as high as 11.5 per user in India. The continuous growth of multi-networking can most likely be attributed to two factors: a widening of platform choice and increasing specialization of individual platforms, such as LinkedIn for career and YouTube for video (Dean, 2021).
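As a quick consistency check on the penetration figures above, the following sketch converts each quoted rate back into a user count. It assumes the definition given earlier (active users divided by the relevant population); the helper names are hypothetical. Both global rates should land near the 4.48 billion users cited for 2021.

```python
def users_from_rate(population_millions, rate_pct):
    """Active users implied by a penetration rate over a population."""
    return population_millions * rate_pct / 100

def population_from_rate(users_millions, rate_pct):
    """Population implied by a user count and a penetration rate."""
    return users_millions / (rate_pct / 100)

# Global figures quoted above, expressed in millions.
via_internet = users_from_rate(4800, 93.33)  # share of all internet users
via_mobile = users_from_rate(5270, 85)       # share of mobile internet users

# U.S. figures quoted above: 231.47 million users at 72.3% penetration.
implied_us_population = population_from_rate(231.47, 72.3)

print(f"users implied by internet share: {via_internet:.1f} million")
print(f"users implied by mobile share:   {via_mobile:.1f} million")
print(f"implied U.S. population:         {implied_us_population:.1f} million")
```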

In addition to the growth of penetration rates and the average number of social media accounts per user, the amount of time spent on social media sites is also increasing. The 2020 Global Web Index clocks the Philippines as having the highest amount of time spent daily on social media out of the 46 countries studied, with an average of 4 hours and 15 minutes per day per user, and Japan as having the lowest, with 51 minutes per day (Dean, 2021). From 2015 to 2020, daily time spent on social media increased by an average of 5.748% year on year, from 111 minutes (about 2 hours) to 145 minutes (about 2 and a half hours) over the 5-year span (Dean, 2021). The annual breakdown for internet users from 47 studied countries, aged 16 to 64, on any device was:

Q3 2015: 111 minutes daily

Q3 2016: 128 minutes daily (15% year-on-year increase)

Q3 2017: 134 minutes daily (4.7% year-on-year increase)

Q3 2018: 142 minutes daily (6% year-on-year increase)

Q3 2019: 144 minutes daily (1.4% year-on-year increase)

Q3 2020: 145 minutes daily (0.69% year-on-year increase)

At this rate, after the age of 16 the average social media user will spend approximately 36.5 days (about 1 month 6 days) a year on various social media platforms. With an average life expectancy of 70 years, this will result in approximately 5.7 years of a user’s life devoted exclusively to social media.
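The conversion from minutes per day to days per year and years per lifetime can be sketched as follows. The function names and the 365-day year are assumptions made for illustration. At 144 minutes per day (the Q3 2019 figure) the yearly total is exactly 36.5 days, and at 145 minutes it is about 36.75 days. With a starting age of 16 and a life expectancy of 70, the sketch gives roughly 5.4 years, so the approximately 5.7 years quoted above presumably rests on slightly different inputs or rounding.

```python
MINUTES_PER_DAY = 24 * 60

def days_per_year(minutes_daily, days_in_year=365):
    """Full 24-hour days spent on social media in one year."""
    return minutes_daily * days_in_year / MINUTES_PER_DAY

def lifetime_years(minutes_daily, start_age, life_expectancy):
    """Years of life spent on social media between start_age and life_expectancy."""
    active_years = life_expectancy - start_age
    return active_years * minutes_daily / MINUTES_PER_DAY

for minutes in (144, 145):
    print(
        f"{minutes} min/day: "
        f"{days_per_year(minutes):.2f} days per year, "
        f"{lifetime_years(minutes, 16, 70):.2f} years between ages 16 and 70"
    )
```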

Amid all this growth, Facebook emerges as the leading social media platform, with 2.85 billion users out of a possible 4.48 billion social media users worldwide as of 2020 (Dixon, 2022). YouTube and WhatsApp follow with over 2 billion users each, and Messenger, WeChat, and Instagram come next with at least 1 billion users each. With current user numbers already so large, the most substantial opportunity for social networks to continue their growth is found in developing countries, where millions of potential new users are adopting modern technology, as was seen in Myanmar. The Pew Research Center documented this growing opportunity by reporting on developing countries and regions that are continuing to increase their overall internet and smartphone adoption (2022). The think tank provided data in 2014 stating that globally a median of 42% of people accessed the internet occasionally, with that figure growing to over 65% in another Pew study in 2017. Within that same period the global adoption of smartphones also doubled to nearly 42% (Pew Research Center, 2022).

An example of this new internet user growth can be found in Myanmar. The International Telecommunication Union reported that from 2001 until 2012 less than 1% of Myanmar’s population had access to the internet; that number later grew to 30% between 2017 and 2018 according to another ITU report (2020). The rapidly decreasing cost of SIM cards may have been a large factor in the widespread adoption of cell phones. By 2012 the price of SIM cards had dropped from thousands of U.S. dollars to just hundreds, and in 2014 it dropped even lower, to approximately $1. These extreme reductions in price made cell phones more affordable and in turn made the internet more easily accessible. By 2015 cell phone penetration in Myanmar had reached 56% of the country’s population (Whitten-Woodring et al., 2020).

Facebook was able to capitalize on this market of potential users, with 40 percent of Myanmar citizens listing Facebook as their primary source of news in 2018, but this growth did not come without consequence (Whitten-Woodring et al., 2020). Pre-publication censorship practices in Myanmar ceased in 2012, and in the years that followed Facebook became synonymous with the internet for the people of Myanmar and went on to become an important outlet for news (McLaughlin, 2018). Between 2016 and 2017 Facebook offered a “free basics” program, which provided a limited version of Facebook to users who took advantage of the offer (Lee, 2019). A free basics account provided access to basic Facebook features without requiring the user to pay for a mobile data plan (Lee, 2019). Facebook gave the people of Myanmar a news outlet that could supply diverse perspectives and access to previously censored information. Unfortunately, without an established, healthy relationship with social media platforms and amid growing civil tensions, Facebook may have done more harm than good, as the platform was instead used as a tool to amplify animosity towards minority groups, especially the Rohingya. Facebook prioritized expansion of the network and rapid growth rather than addressing the amplification of civil unrest, and because of this there was a sharp increase in violence toward the Rohingya (Whitten-Woodring et al., 2020).

Failure to resist social media use

This raises a question: if social media is just a tool, why won’t users stop using it when it becomes apparent that its use is detrimental in some way? One explanation may be Social Media Self-Control Failure (SMSCF), the tendency of an individual to use social media even when that use conflicts with the individual’s goals. It is common for social media users to get into arguments with others holding opposing viewpoints on sports, music, entertainment, and other topics, and this conflict leads to an increase in aggression in the user. Research shows that SMSCF leads to aggression in the user and also in the user’s network of connections (Hameed & Irfan, 2020).

Due to the constant availability of social media, users are often unable to control their usage behavior (Reinecke & Oliver, 2017). According to research by Hofmann et al., 42% of individuals fail to control their social media usage behavior (2012). The desire to use social media presents itself as one of the most difficult desires to resist, and SMSCF has been shown to be more prevalent than deficient self-regulation on social media, social media addiction, and problematic social media use (Du et al., 2018). Because of this inability to control their own behavior, users can find that their social media use interferes with their goals and responsibilities, leading to further frustration and aggression. Additionally, scores on the SMSCF-scale were negatively related to user well-being and positively related to time spent on social media, with users experiencing an increase in guilt due to usage control failure (Du et al., 2018).

SMSCF leads to an increase in social media use, and an increase in social media use in turn leads to interaction with people of differing or opposing viewpoints on a variety of topics, including politics. Interactions with users who hold opposing viewpoints increase the chance of provocation, and increased provocation causes further aggression in the user. This aggression is transmitted to the user’s social network, which adopts the behavior after viewing the user’s own (Hameed & Irfan, 2020). To become associated with a group, users will sometimes adopt a level of group thinking to gain or maintain membership, leading to like-minded aggressive personalities in groups (Pavan & Felicetti, 2019). This level of aggression from groupthink can be identified as a higher stage of SMSCF (Hameed & Irfan, 2020). The behavior adopted from one user by another ranges from rudeness to physical assault (Foulk et al., 2016). This is also seen in children, who become more involved in violence when connected with other aggressive children in their group (Dishion & Tipsord, 2011). This could offer one explanation of why the growth of Facebook led to the growth of violence against, and eventual genocide of, the Rohingya in Myanmar.

Hameed & Irfan studied the effects of SMSCF specifically and concluded that users who encounter other users exhibiting SMSCF are more prone to aggression and may have higher levels of aggression even if they do not personally exhibit SMSCF (2020). Their findings suggested that SMSCF can spread throughout social networks via virtual interaction, may spread at a mass level, and may ultimately result in increased aggression in society, as was seen in Myanmar (Hameed & Irfan, 2020). Hypothetically, because of the archival nature of social media, it may be possible to trace these interactions to the originating users or user groups that spawned the aggression in the network. Research by Du et al. has shown that high depletion sensitivity, the rate at which individuals’ self-control resources are depleted in response to conditions requiring self-control (Salmon et al., 2014), and low trait self-control are associated with higher scores on the SMSCF-scale (2018). With this information it is reasonable to assume that there is a type of user with these attributes who may be naturally more susceptible to SMSCF.

Another explanation for why users will not or cannot stop using social media even after evidence of it being detrimental may be persuasive marketing tactics such as growth hacking. Growth hacking is a guerrilla growth-centered marketing tactic that involves science, data, and process to develop methods to maximize userbase growth (Ellis, 2010). It is a framework to spot methods for efficiently providing value for an implementor at scale and develop engagement and trust. Growth hackers work to find innovative ways to create ‘the right relationships’ faster (Fechter, 2021). These ‘right relationships’ are the connection of products to the right customers, customers that would be interested in the product or service. This relationship would include acquiring the userbase and identifying the proper channels to communicate with customers or potential customers (Lee, 2016).

Research has shown that growth hackers use three strategies to build right relationships: persuasion marketing, social responsibility, and cause marketing (Feiz, 2021). The two most relevant strategies for this paper are content marketing and persuasion marketing. Content marketing focuses on creating and distributing content to maintain a well-defined audience and drive profitable customer behavior (Pulizzi, 2012). Based on Feiz’s research, persuasion marketing mainly uses promotional marketing tactics to target customers’ impulsive behaviors and lead them down pre-planned pathways (2021). It takes advantage of user psychology by utilizing web page layout, typography, and promotional messages to direct users along pre-planned pathways on the website or toward specific actions (Feiz, 2021). These two strategies, both stemming from persuasive science, begin to illustrate how certain websites, including social media sites, can persuade users to complete actions they normally might not by taking advantage of their psychology. This can further be seen in conversion marketing.

Growth hackers' ultimate goal is to optimize the conversion rate of users, turning them from browsers into purchasers of products or services (Holiday, 2013). Website design can also fall under the branch of conversion marketing. One company surveyed in the research paper by Feiz and his team reported:

“Having the support widget on the front page of the site is a must. It is required for a two-way interaction. You may need guidance as a user, and other methods do not work as efficiently” (Feiz, 2021)

These conclusions come from various methods of testing and analytics used to determine which methods beyond traditional marketing best suit products and services to their audience (Wilhelm, 2015). The Rauhala and Sarkkinen study received responses from two unnamed companies that described the data-driven, test-driven, and scientific methods used by their growth hackers (2015):

“We use Google Analytics and other Google Analytics tools such as Google Webmaster on our website. There are a number of other tools such as Moz that we use for SEO and its analysis.”

“We have a data collection and data mining system from users inside the site and other related media, and based on this information we decide how to design development approaches.” (Rauhala & Sarkkinen, 2015)

Having such a measured method of growth and data analytics can help companies predict future trends and take ‘next steps’ more carefully due to the seemingly limitless insight gained from the involvement of customers (Salemisohi, 2015). This could play a key role in explaining the draw Facebook was able to achieve in Myanmar. With a growth hacking mindset and a method of A/B testing, former Facebook Head of Growth Chamath Palihapitiya was able to discover that getting an individual to 7 friends on Facebook in the first 10 days of having an account would convert them to a regular user (Palihapitiya, 2013). Given that growth hacking can provide insight into future trends, along with knowledge of how to convert a potential user into a regular user, it is fair to assume that Facebook’s impact in Myanmar was intentional. This is further cemented by the opportunity for Facebook access regardless of cellular or internet plans. Not only do we start to see how companies like Facebook are willing to manipulate users to grow their userbase, but also the real-world effects that can take place when bad actors are able to disseminate their information to vulnerable groups of people online.

Formation of vulnerable groups online

Vulnerable groups can vary in size, cause of formation, composition of members, and ideologies, along with a slew of other characteristics. For the purpose of this paper, partisan blocking on social media sites was examined as a cause of formation of vulnerable groups. Over time, social media users can be subject to, or create for themselves, an environment that is homophilic after various amounts of partisan blocking. The work by Kaiser et al. showed that social media users are more likely to unfollow, unfriend, or block other users from whom they differ politically than users with whom they align politically (2022). This persistent selective avoidance of opposing viewpoints through partisan blocking can, over time, result in a polarized social network (Kaiser et al., 2022). This will most likely affect users’ patterns of exposure to people, information, and epistemic norms, as they virtually surround themselves with echo chambers of similar opinions and experiences drawn along ideological lines (Newman et al., 2020). It is possible that this is one form of inception for vulnerable groups that may go on to be utilized in bad actors’ attempts at cyber physical attacks stemming from social media.

Research has shown that unfollowing, unfriending, and blocking are not equally likely across all of a user’s social media connections but instead are more or less likely depending on the friend’s perceived political similarity to the user (Kaiser et al., 2022). Normally, with a mindset of in-group favoritism and out-group exclusion, users will be more likely to unfollow, unfriend, and block politically dissimilar friends (Bode, 2016; Zhu et al., 2016). Kaiser’s team conducted research on the likelihood of someone blocking or unfollowing a friend specifically in relation to the spread of misinformation. The results showed that it was not the political issue being shared that would lead to a misinformation sharer being removed from a user’s social network but instead the sharer’s political similarity or dissimilarity to the user (2022). Tests showed that politically dissimilar misinformation sharers were significantly more likely to be unfollowed or blocked than politically similar misinformation sharers, even when the same misinformation had been shared. Furthermore, tests indicated that dissimilar misinformation sharers are more likely to be unfollowed or blocked regardless of whether the information shared is implausible or moderately implausible (Kaiser et al., 2022).

However, this method of forming a vulnerable group is not a one-size-fits-all formula across all potential and actualized disgruntled groups of people. For example, based on Kaiser et al.’s work, the process of building a homophilic group by unfollowing or blocking was substantially stronger among left-wing misinformation receivers than among right-wing receivers, who showed no evidence of this reaction at all (2022). By utilizing a continuous 6-point scale for misinformation receivers' ideology, Kaiser’s team was able to conclude that only left-wing receivers showed a stronger propensity toward partisan unfollowing or blocking, and this propensity grew the further left the user fell on the 6-point ideology scale (Kaiser et al., 2022). Right-wing social media users are instead more likely to become more homophilic due to backfire effects, as shown in Bail et al.’s work. This study revealed that when right-wing social media users are exposed to left-wing content they are more likely to exhibit substantially more conservative views, growing by between 0.11 and 0.59 standard deviations on a seven-point political scale (Bail et al., 2018).

While these two methods of inception for a vulnerable group of people are quite different, they both result in the same outcome: a polarized homophilic group or community of people who share the same frustrations and ideologies. Kaiser’s team speaks to this by saying “Homophilic online networks may be useful for rapid mobilization among the like-minded”, which often may be useful and effective for creating positive change, but then goes on to say, “communication researchers ought to pay greater attention to how the fine grain of technological design can matter for public life and have unintended consequences” (Kaiser et al., 2022). The possible positive impact of having a rapidly mobilizable group of like-minded people may be overshadowed by the potential for bad actors to take advantage of these groups by mobilizing them into actions that could be seen as detrimental to society.

Radicalizing vulnerable social media users

The idea of using social media to radicalize vulnerable people and groups is not a new one. Terrorist groups such as ISIS have done this for years via Facebook, Twitter, YouTube, chatrooms, blogs, and messaging systems, along with other social networking platforms (Purdy, 2015). ISIS has a relentless social media campaign aimed at English-speaking individuals that provides access, through a medium familiar to the target audience, to those who are seeking a path towards radicalization (Purdy, 2015). Their content glorifies the adventure and violence of their fighters and martyrs, manipulating internet users into joining their mission (Purdy, 2015). This process of radicalization can be seen with Major Nidal Hasan, the Ft. Hood shooter. He had exchanged communication with fellow American citizen Anwar al-Awlaki, an al-Qaeda propagandist who created content to recruit individuals for jihad (Purdy, 2015).

One of the most recent examples of bad actors manipulating vulnerable people via social media is the QAnon conspiracy resulting in the January 6th insurrection. In May 2019, the Federal Bureau of Investigation published a report detailing the then-increasing influence of anti-government, identity-based, or fringe political conspiracies on motivating criminal or violent activity (2019). The report stated that “based on the increase volume and reach of conspiratorial content due to modern communication methods, it is logical to assume that more extremist-minded individuals will be exposed to potentially harmful conspiracy theories, accept ones that are favorable to their views, and possibly carry out criminal or violent actions as a result” (Winter, 2019). The report then went on to emphasize a crowd-sourcing effect caused by the internet where “conspiracy theory followers themselves shape a given theory by presenting information that supplements, expands, or localizes its narrative” (Amarasingam & Argentino, 2020). This aspect separates the QAnon conspiracy from the jihadist recruitment style and even from other far-right extremist groups (Amarasingam & Argentino, 2020). The user behind the QAnon conspiracy, Q, instead directed QAnon followers to determine their own interpretation and take action into their own hands (Amarasingam & Argentino, 2020). The QAnon conspiracy has its roots on the website 4chan, where it likely would have remained had two moderators, Coleman Rogers and Paul Furber, not intervened (Zadrozny & Collins, 2018). The two 4chan moderators reached out to YouTuber Tracy Diaz to leverage her YouTube following by promoting various Q posts. This was the first instance of QAnon community members attempting to spread the conspiracy with the intention of convincing or manipulating others to join the community and eventually commit a group action.

Various criminal cases involving QAnon followers outlined how the QAnon conspiracy had contributed to the radicalization of ideologically motivated violent extremists. The FBI stated that conspiracy theories such as this could “very likely motivate some domestic extremists, wholly or in part, to commit criminal and sometimes violent activity” and that “one key assumption driving these assessments is that certain conspiracy theory narratives tacitly support or legitimize violent action” (Winter, 2019). With a following built to crowdsource information that substantiates any claims made by a community that promotes or justifies violence, members may be manipulated or convinced to commit actions that they would not have committed under normal circumstances. This could be a possible explanation as to why someone such as Edgar Maddison Welch would enter the Washington, D.C.-based pizza restaurant Comet Ping Pong with an AR-15 rifle and a .38 revolver.

Amarasingam & Argentino examined Welch’s actions of entering a pizza restaurant with two guns on his person, in addition to a loaded shotgun and ammunition in his car, all of which he brought in hopes of finding evidence of child trafficking taking place in the restaurant (2020). Following his arrest, evidence collected from his cell phone indicated that Welch had watched several YouTube videos outlining the details of ‘Pizzagate,’ a conspiracy theory alleging that Comet Ping Pong was a hub for an elite cabal of child traffickers. These videos led to his vigilantism, and he also admitted to having heard what he referred to as news reports of a child-sex trafficking ring located at the restaurant.

In the same vein, Jessica Prim was arrested and charged with 18 counts of criminal possession of a weapon after traveling from Illinois to New York City with a car full of knives, threatening to kill Joe Biden for his alleged involvement in a deep state sex trafficking ring. The most alarming aspect of Prim’s radicalization is the speed at which it happened. She stated in a video she had posted to Facebook that her relationship with the QAnon conspiracy started when one of her clients recommended she watch Fall of the Cabal, a QAnon documentary by Dutch conspiracy theorist Janet Ossebaard. In approximately 20 days Prim had gone from being unaware of the QAnon conspiracy theory to being indoctrinated to the point of acting on radical threats of violence stemming from online videos containing claims of sex trafficking rings and deep state government attempts to control the population (Amarasingam & Argentino, 2020).

Research has shown that a strong conspiracy mentality increases the likelihood of violent extremist behavior, with the likelihood increasing further in individuals with low regard for the law, high self-efficacy, and low self-control (Rottweiler & Gill, 2020; Rousis et al., 2020). Low self-control is linked to both the potential for violent extremist behavior and SMSCF, meaning it may be a key characteristic by which a bad actor could identify an ideal candidate to manipulate. On a larger scale, groups where this characteristic is common, possibly common enough to be a topic the group bonds over, have the potential to be identified as a vulnerable group and fall victim to a mass manipulation attack. Individuals can accept conspiracy theories as explanations for complex events, which often results in the singling out of enemies regardless of the theories being false or based on unsubstantiated claims. Mistrust in government, global shocks, and unexpected events are all additional push factors that can influence an individual to adopt a conspiracy-accepting mindset (Hofstadter et al., 2021; van Prooijen & Douglas, 2017).

The QAnon community has an often-repeated slogan of “do your own research”, which mirrors academia’s source citation and evidence presentation (Amarasingam & Argentino, 2020). Followers of this community often create presentations of evidence to substantiate their claims, resulting in those same followers self-identifying as investigative journalists (View, 2018). Because of this, followers may feel deeply invested in the community because it provides an outlet for them to develop a sense of self. These individuals often experience issues with their self-worth, sense of belonging, and self-esteem. Self-uncertainty such as this leads to strong attitudes towards social issues and stronger self-identification with important groups. An inadequate sense of belonging causes individuals to seek it outside of their everyday lives, occasionally in places such as terrorist groups, gangs, and conspiracy theory communities (van Prooijen, 2015).

Once groups of individuals suffering from these issues form, they can further suffer from group polarization and groupthink, two psychological phenomena that can lead to escalation of conspiracy theories, violent extremism, and even terrorism. In a community of like-minded individuals, ideologies can be emboldened within the echo chamber, and individual members may begin to make decisions based on what they believe other members are doing (Sunstein, 1999; McCauley & Moskalenko, 2008). Groupthink occurs when members of a group make decisions or take actions in a more extreme manner than they naturally would in order to conform to group norms, which further increases the opportunity to use these groups as a vehicle for manipulation (Janis, 1982). When groupthink takes hold, members lose their ability to be open to alternative lines of thinking, including counternarratives and all evidence contrary to their beliefs (Janis, 1982). Regarding the attacks being discussed, groupthink would create a group of individuals willing to conform to the will of a bad actor with the intention of meeting group norms and expectations.

Amarasingam & Argentino of the CTC Sentinel said in July 2020 that “If more individuals with greater organizational skills and operational acumen seek to pursue QAnon’s agenda, it could eventually lead to more significant threats to public security and become a more impactful domestic terrorism threat”, along with “The increased consumption and circulation of misinformation on social media, as well as its negative consequences, is evinced especially by QAnon, but its effects on public safety are not limited to it. The emergence of future (related or unrelated) conspiracy theories that may be effective at radicalizing individuals to terrorist violence should thus not be ruled out as threats to public security” (2020). With more effective organization and operational skills, we can see these warnings come to fruition, with these individual manipulation attacks culminating in one mass manipulation attack on January 6th, 2021.

Manipulation of social media users at scale

While some vulnerable groups are created organically through diverse types of individuals finding refuge in a community they can identify with to some degree, others are cultivated inorganically through the influence of bad actors. Nisos’ 2022 report outlined the information gained from the hacktivist group Digital Revolution on a botnet system of Internet of Things (IoT) devices created by a contractor by the name of 0day Technologies. This botnet is known by the codename Fronton (Nisos, 2022). What was initially thought to be a botnet created for distributed denial of service attacks was determined to be a system developed for coordinated inauthentic behavior on a massive scale to conduct next generation social engineering attacks. The report stated that “Fronton is a system developed for coordinated inauthentic behavior on a massive scale. This system includes a web-based dashboard known as SANA that enables a user to formulate and deploy trending social media events en masse. The system creates these events that it refers to as Инфоповоды, “newsbreaks,” utilizing the botnet as a geographically distributed transport.” SANA, assumed to be an acronym for Social-media Analytical Scientific Apparatus when translated, is a system for the creation and management of social media accounts with the intention of posting disinformation on various social media platforms (Nisos, 2022).

The Kvant Scientific Research Institute published a report describing the disruptive power of social media and proposing methods for creating “social media waves” as a means of “spreading manipulative models in information spaces” or “a controlled method of information dissemination to a target audience” (Nisos, 2022). The report noted “information technologies used in social networks create the potential for authoritarian socialization and manipulative influence on a person; moreover.... they challenge the interests of public and state security.” This reinforces the idea that social media platforms can be utilized by bad actors to conduct mass manipulation social engineering attacks. The report also listed information on how to influence an audience by utilizing three methods of persuasion: logos, pathos, and ethos (Nisos, 2022).

The Nisos report describes a video file released by the Digital Revolution group that shows the SANA interface and indicates how effective it could potentially be as a manipulation tool with varying degrees of tuning (2022). Features of the system included newsbreaks, groups, behavior models, response models, dictionaries, and albums. The Nisos report gave the following descriptions of each feature:

Newsbreaks track the occurrence of necessary messages at given sites and how to respond to them.

Groups implement flexible bulk management of bots.

Behavior Models set up background bot activity which allows bots to be indistinguishable from normal users.

Response Models describe how to react to messages found.

Dictionaries store and categorize phrasebooks, quotes, and comments for social media responses as positive, negative, and neutral influences based on topic. It also provides a location to store lists of names and surnames.

Albums allow the maintenance of photograph sets for platform bot accounts (Nisos, 2022).

Nisos reported that newsbreaks, events that create information noise around a brand or company and attract media attention, appear to be the core of the system. Based on the information from the Nisos report, the SANA interface tracks shared link sources, shared links, release times, social media post text, and quantity of posts for all newsbreaks defined or created by the bad actor (2022). Newsbreaks appear to be an effective strategy for drawing attention to a topic of interest because bad actors are able to create one at any time with little to no expense. After the bad actor configures their desired newsbreak, the system will mobilize at the specified date and time and deploy a group of artificial users to react positively, negatively, or indifferently using a predefined reaction model. Behavior models for the system and response models for newsbreaks are not one-size-fits-all tools; instead, both are configured specifically to meet the user’s goals in different ways depending on the platform selected. The system allows the user to configure models for supported platforms, which include social media sites, blog sites, and various forums. Additionally, this system can even account for two-factor authentication and similar text requests by taking advantage of an integrated SMS service supported via API by SANA (Nisos, 2022).

Impacts of social media in relation to technological determinism

The effectiveness of mass manipulation attacks can be traced back to various aspects of technological determinism, the idea that technology shapes social change (Drew, 2022). As this paper has previously stated, users will accept simple answers to understand complex problems. Martin Hirst’s paper ‘One tweet does not a revolution make: Technological determinism, media and social change’ explores how this phenomenon can result in what he refers to as soft technological determinism, citing the Arab Spring events of 2010-2011 as an example (2012).

In Hirst’s example, he describes how writer Michael Binyon wrote that the events being recorded began in the year 2011 and that “telephones in the hands of angry young men and women” broke the political stasis in Tunisia; however, this was not true (Hirst, 2012). Sameh Naguib argued that “The Egyptian revolution did not come out of thin air,” meaning that this conflict did not erupt all at once when writers like Binyon became aware of it but was instead a breaking point after years of political unrest (Naguib, 2011). Binyon’s mistake could be chalked up to ignorance, but because of the nature of technological determinism his inaccurate information reached a large audience and, had it gone unchallenged, would have been adopted as the simple explanation for the complex events of the Arab Spring (Hirst, 2012).

Without clarification, Binyon’s and other related stories would have been adopted as truth by the masses and shaped what is referred to as the ‘first rough draft of history’ (Hirst, 2012). Creating a narrative that the Arab Spring events happened because men and women had “suddenly understood the power of the new media” (The Times, 2011) reflected the West’s lack of historical knowledge on the topic while still attempting to provide an easy-to-digest narrative based on available facts without requiring difficult historical contextualizing for the media outlet’s audience (Hirst, 2012). Edward Tenner is credited with coining the term for this bias, ‘the bias of convenience’ (Rosenberg & Feldman, 2009), which can develop from a need for speed in disseminating information or be linked to a certain level of groupthink amongst reporters (Tiffen, 1989). Technological determinism views technology as taking on a ‘life of its own’ as a driver of social phenomena (Hirst, 2012). With Hirst’s report we can see how technology is able to direct human behavior by providing an outlet for the misinformation of journalists and by being a vehicle for that information to be passed on to consumers via both targeted and non-targeted curations of news media. This parallels mass manipulation attacks: news consumers’ thoughts and behaviors are shaped by inaccurate news reports, just as social media consumers’ thoughts and behaviors are shaped by coordinated misinformation campaigns. The impacts of technological determinism continue after a consumer has their thoughts influenced by misinformation, through a social phenomenon known as emotional contagion.

Emotional states of people can be transferred to others through emotional contagion, which causes those others to experience the same emotions (Kramer et al., 2014). With emotional contagion, emotions expressed by someone via online social networks can influence their friends’ moods via text-based computer-mediated communication, even in the absence of verbal or nonverbal cues (Kramer et al., 2014).

The work done by Kramer et al. provides evidence that this phenomenon can happen on a massive scale through online social media platforms (2014). Coviello et al.’s research has shown that each positive post by a user yields an additional 1.75 positive posts among their friends and each negative post yields 1.29 negative posts among friends (2014). Additionally, these positive and negative posts have inhibitory effects on one another. Each positive post by a user decreases the number of negative posts by that user’s friends by 1.80, and each negative post decreases the number of positive posts by that user’s friends by 1.26 (Coviello et al., 2014). Ferrara & Yang’s research analyzed 3,800 users and found that 80% of the user set had up to 50% of their tweets affected by emotional contagion, while the remaining 20% of users had greater than 50% of their tweets affected by emotional contagion (2015). The research conducted by Coviello et al. stated “These results imply that emotions themselves might ripple through social networks to generate large-scale synchrony that gives rise to clusters of happy and unhappy individuals. And innovative technologies online may be increasing this synchrony by giving people more avenues to express themselves to a wider range of social contacts. As a result, we may see greater spikes in global emotion that could generate increased volatility in everything from political systems to financial markets” (2014). This volatility could lead to physical consequences.
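As a rough illustration of the per-post figures reported above, the following Python sketch combines the four effects into a single calculation. It assumes that the effects simply add up linearly across a user's posts, which is a simplification made here for illustration and not a claim made by Coviello et al.; the constant and function names are hypothetical.

```python
# Per-post effects on friends' posts, as reported in the text above
# (Coviello et al., 2014). Names are illustrative only.
POS_TO_POS = 1.75   # extra positive posts among friends per positive post
NEG_TO_NEG = 1.29   # extra negative posts among friends per negative post
POS_TO_NEG = -1.80  # change in friends' negative posts per positive post
NEG_TO_POS = -1.26  # change in friends' positive posts per negative post


def friend_post_change(positive_posts, negative_posts):
    """Estimate the change in friends' positive and negative posts.

    Assumption (not from the source): effects are additive and linear.
    Returns a tuple (change_in_positive, change_in_negative).
    """
    change_in_positive = POS_TO_POS * positive_posts + NEG_TO_POS * negative_posts
    change_in_negative = NEG_TO_NEG * negative_posts + POS_TO_NEG * positive_posts
    return change_in_positive, change_in_negative


if __name__ == "__main__":
    pos, neg = friend_post_change(positive_posts=2, negative_posts=1)
    print(f"Change in friends' positive posts: {pos:+.2f}")
    print(f"Change in friends' negative posts: {neg:+.2f}")
```

Under these assumptions, a user who writes two positive posts and one negative post would be associated with roughly 2.24 additional positive posts and 2.31 fewer negative posts among their friends.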

Taking advantage of this volatility is not a threat posed only by bad actors but also by the owners of the social media platforms themselves. Features such as the Facebook News Feed, where content is either shown or omitted as determined by a Facebook algorithm, could be used to influence people’s emotions (Kramer et al., 2014). The News Feed ranking algorithm is modified with the intention of showing viewers the content that they will find the most relevant and engaging, but it can also be adjusted to influence users’ behavior (Kramer et al., 2014). This was made clear by Tim Kendall saying “We often talked about, at Facebook, this idea of being able to just dial that as needed [by adjusting algorithms]. And, you know, we talked about having Mark have those dials. “Hey, I want more users in Korea today.” “Turn the dial.” “Let’s dial up the ads a little bit.” “Dial up monetization, just slightly.” And so, that happ– I mean, at all of these companies, there is that level of precision” (Coombe et al., 2020).

Bond et al.’s experiment involving a political mobilization message on Facebook was a proof of concept for the idea that Facebook itself can influence people to complete actions (2012). The team created two messages relating to the 2010 US congressional election: a social message, which included images of the faces of friends who had interacted with the message themselves, and an informational message. Both provided a link to locate a voting location and an ‘I Voted’ button to display that the user had voted. Selected users would receive one of the two messages as a banner above their News Feed. Results showed that users who had received the social message were 0.39% more likely to vote than users who had received no message at all. Just the addition of friends’ faces to the social message made a similar difference, a 0.39% increase in likelihood over the informational message. Through vote validation the team was able to conclude that a single message on Facebook, the social message, resulted in an increased turnout of 340,000 voters (Bond et al., 2012). With this study we can assume social media users, groups, and platforms themselves all have the ability to manipulate people on massive scales.

Technological determinism, an enabler for mass manipulation attacks

Mass manipulation attacks are an unintended secondary effect of having a global connection of people, the internet, enabled by the principle of technological determinism. Technology such as social media platforms can result in undesirable outcomes such as addiction to the platforms at such a level that users develop anxiety and feel restless when they are unable to check their social media accounts, as seen in 45% of British adults (Hilal Bashir & Shabir Ahmad Bhat, 2017). This anxiety can manifest itself in such extreme ways that users may develop phantom vibration syndrome (PVS), an addicted user’s perception that their cell phone is vibrating when it is not, which is a symptom of obsessive social media use (Drouin et al., 2012; Rothberg et al., 2010). A study by Park, Song & Lee concluded that use of social media platforms such as Facebook is positively associated with acculturative stress in college students (2014). Time spent on Facebook is also positively correlated with depression in adolescents (Pantic et al., 2012). Studies have found symptoms of major depression among individuals who spend most of their time on online activities or performing image management on social media sites (Rosen et al., 2013). Students who use Facebook often also suffer from an enhanced sense of loneliness (Lou et al., 2012). Today’s youth are lonelier than previous groups, the loneliest ever recorded (Pittman & Reich, 2016). Loneliness is understood as “discrepancy among desired level and practical level of social contacts of an individual’s social life” (Hilal Bashir & Shabir Ahmad Bhat, 2017). Skues, Williams, & Wise reported that the more Facebook friends a student has, the higher the level of loneliness that student reports (2012). These mental health issues are all in addition to the previously mentioned SMSCF, and together they demonstrate how this technology, social media, has impacted society in some negative ways.

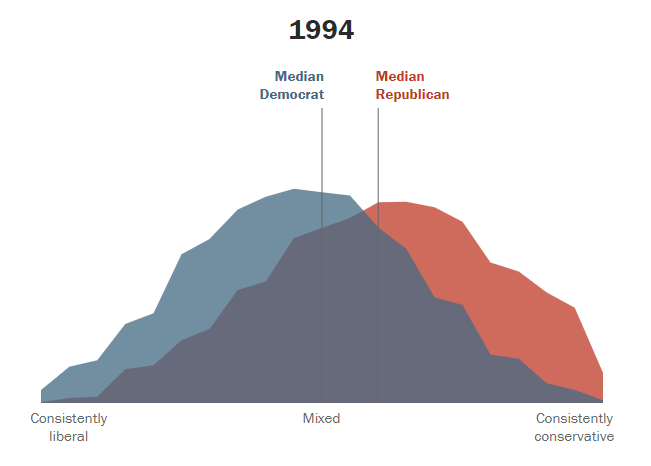

This paper has examined social media’s secondary effect of polarizing groups of people on smaller scales, such as specific online groups and specific message boards, but this effect can also be seen on a larger scale. It can be seen at scales as large as politics as a whole, as liberals and conservatives move further apart in policy positions. Figure 1 illustrates the overlap of political values of Democrats and Republicans in 1994, with the purple area representing the policy positions on which the two parties overlapped.

Figure 1 Political Polarization 1994. Pew Research Center. (2017). The shift in the American public’s political values [Graph]. Pew Research Center.

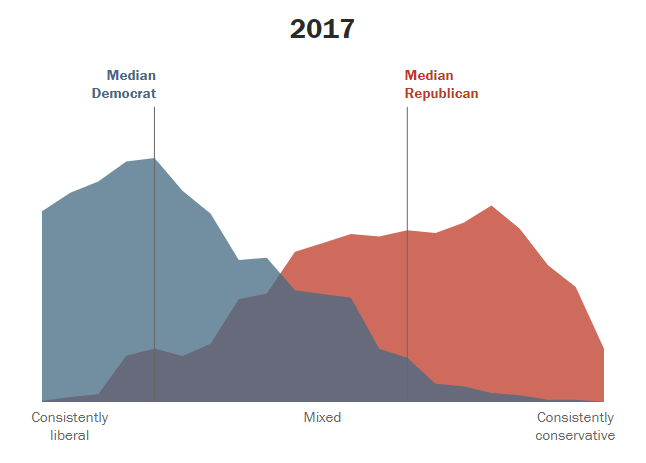

Figure 2 shows the values of these same parties in 2017, with a drastically different area of overlap between the two parties.

Figure 2 Political Polarization 2017. Pew Research Center. (2017). The shift in the American public’s political values [Graph]. Pew Research Center.

The difference in these two figures also follows the timeline of the adoption of social media networks. Facing History lists in-group bias and media bubbles as two reasons for the growing polarization among political parties, both of which this paper has described as resulting partially or entirely from social media platforms (Political Polarization in the United States, 2019). Social media sites can exacerbate both of these issues, leading to animosity and vulnerability in followers of either party. The growing animosity can be seen in survey data: 4% of Republicans and 4% of Democrats once reported that they would be displeased if their child married someone of the opposite political party, a figure that jumped to 45% of Democrats and 35% of Republicans as of 2019 (Iyengar et al., 2019). These feelings of animosity and vulnerability can continue to spread to all internet users through the phenomenon of emotional contagion.

All these things come together to explain how a social media site user could become compromised by the information found on those sites. In the case of a mass manipulation attack, internet users become compromised systems to be exploited by bad actors. In instances such as the January 6th insurrection, people, the compromised machines, became weapons utilized to complete a cyber physical attack on the United States Capitol Building.

Conclusion and discussion

While easily overlooked, all technology holds unpredictable, unintended consequences. In the case of this research, social media platforms, once created to make staying connected with friends, family, and acquaintances easier and more convenient, have grown into a national security threat due to their influential nature and design. Research is still actively being conducted to understand the full effects of social media self-control failure and how it can influence a person’s life and relationships. At the same time, researchers are still trying to understand the influence of emotional contagion via the internet and how impactful it can be to society and subpopulations. Evidence points to both phenomena being detrimental to the average person’s life without even considering the effects of the combination of both, and as the collective internet userbase grows so too will the impacts of its overuse. The resulting vulnerable groups that are formed in relation to these issues pose a threat not only to overall public health but also to public safety, as some of these groups morph into threats to national security. With all this information considered, it becomes clear that the potential for bad actors to attempt next generation social engineering attacks through the method of mass manipulation attacks is both plausible and growing.

It is essential to attempt to understand these events with acknowledgement of where they originated. These events are not happening by happenstance but are a product of non-self-regulated social media use in combination with various psychological phenomena, resulting in the threat of various forms of cyber physical attacks. Not all of these events were intentional, and some may not have even stemmed from a bad actor. For example, the Myanmar genocide of the Rohingya people appears to have stemmed from negligence, with Facebook prioritizing userbase growth over mitigating impacts on societal unrest. However, the more critical possibility of bad actors mobilizing their dedicated followers should be anticipated to minimize the success of their attacks. Incidents like the January 6th insurrection should be reframed from terrorist attacks to social engineering attacks in order to begin developing strategies to identify potentially dangerous groups early and proactively protect civilians from potential danger. Once the perception of these attacks has been changed to results of malicious social media use, researchers can begin to work towards developing solutions for the increasingly polarized and radicalized users currently on social media while also decreasing the probability that this issue will continue to grow with internet and social media growth.

Future research

Future research could attempt to understand how the addictive design of social media platforms such as Facebook increases the effects of social media self-control failure. While social media platforms most likely create these designs to maximize user session time, it can be hypothesized that addiction to social media sites increases the userbase’s negative emotions such as depression and anxiety. These negative emotions may lead to a vulnerable population that can be taken advantage of while seeking self-worth and validation and that could possibly grow into a national security threat. It may be beneficial to compare people with and without addictive personalities to better understand how social media platform designs can impact society. Along with platform design, the impacts of algorithm design should also be explored. It can be hypothesized that people looking for a sense of self and to build self-worth could strongly identify with exclusively one interest and build their sense of self solely around it. With algorithms optimized for presenting content that maximizes user engagement, users may be molded into personalities composed of a singular interest that they identify with, resulting in a ‘one dimensional personality’ rather than a more well-rounded, multifaceted sense of self.

Additionally, future research could examine the impacts that parasocial relationships have on people and the extent to which those relationships can influence the behavior of an audience or audience member. Parasocial relationships are one-sided relationships in which one person extends emotional energy, interest, and time while the other party (the persona) is completely unaware of the other’s existence (Parasocial Relationships: The Nature of Celebrity Fascinations, 2020). It is possible to hypothesize that a parasocial relationship initiated by a bad actor could provide a vehicle for that bad actor to intentionally build an audience with ideal personality traits to be manipulated. This research could also attempt to outline a repeatable process for how to form vulnerable groups and how to build the necessary trust to convince audiences both large and small to act irrationally. Coviello et al.’s research provided evidence that effects of emotional contagion could plausibly be stronger when subpopulations are geographically defined (2014). If the physical geography of a subpopulation could increase emotional contagion effects, then it would be worth exploring whether virtual subpopulations such as subreddits, Facebook groups, and Discord channels could also have an impact on the effectiveness of emotional contagion. If this were true, it could create a compounding effect when an influencer’s audience becomes vulnerable due to the development of a parasocial relationship in combination with spending substantial amounts of time in virtual subpopulations where exaggerated emotions would spread rapidly. Because of this, future social media influencers may become national security threats, or bad actors may instead generate cyber physical attacks by masquerading as social media influencers.

References